I rewrote my blog system with .NET Core last year. After more than a year of optimization, the server response time is now 8ms, compared to 80ms when it first went live. This article shares what I have done to make my blog really fast.

In fact, before .NET Core, my old blog system was built on .NET Framework, evolving from ASP.NET Web Forms 2.0 in 2008 all the way up to ASP.NET MVC 5 in 2018. Today, .NET Core is a great performance boost over .NET Framework, and it beats Node.js and Java in many benchmarks. But it doesn't make much sense to look only at benchmarks: most performance problems in our applications are caused not by the language or framework we choose, but by poor design and incorrect use of the framework. I've learned a lot from practicing .NET Core, so I wrote this article to share my own performance optimization experience.

No Silver Bullet

First of all, every system is different. Performance optimization methods depend on the business scenario, the application area, and the user group; there is no universal approach. For example, my blog is a content site: it has little interaction but reads a lot of data, so I want to improve data reading speed. Other systems, such as e-commerce sites, have far more complex business logic than content sites, and even extreme situations such as "seckill" (flash sales); clearly, reading data is not the dominant operation for these systems. For example, in China, Alibaba will tell you "don't use foreign keys on tables". This is because Alibaba's load is extremely high and it needs extreme write speed, to the point where foreign keys become a bottleneck. Obviously my blog and many content sites do not face this scenario, so we can still use foreign keys as a good database practice.

So, before you read the rest of this article, you must understand that there is no silver bullet in software design. The experiences I list here apply only to my own blog. Most of them can be applied to similar content sites, but do not apply them blindly to every application. Even among content sites, the target users and the server load differ. My blog certainly cannot compete with CNN, BBC, etc., so the key points and methods of optimization are also very different. Keep that in mind.

Analyze and Discover Key Points

When we design a system, we make some assumptions, such as which features users will use most and which requests will be most frequent. But after launch, the users' real behavior is the truth, and sometimes the system's performance differs from our expectations. Moreover, over time, users' habits may change, and the modules of the system that are under pressure may change as well. Therefore, we need to record and analyze the data and user behavior that the system generates in actual use.

Azure Application Insights, which I'm using, is a great APM tool that helps me collect and analyze usage data. A website's performance is determined by both the server side (back end) and the client (front end). Azure Application Insights can simultaneously collect back-end API processing time, database query time, front-end resource load time, JS execution time, etc. It also automatically identifies the slowest requests and where the system spends the most time (front end, application, or database), and Azure SQL Database even recommends optimizations automatically based on actual usage (e.g., where and what to index). How to use APM tools is beyond the scope of this article. However, when optimizing performance, you need to analyze real user data first and then identify where optimization is needed. A few months after my blog went online, my analysis was as follows:

- Client performance overhead in loading resources and excessive requests (front-end library, blog post images)

- Server performance overhead in too many duplicate SQL queries

- Performance overhead by reading from Azure Blob Storage and returning to the front end (double the cost)

Front-End Practices

Do we really need to put JS at the end of <body>?

This is a principle almost every web developer knows. If you put your scripts at the end of the page, just before the </body> tag, the browser can parse and render the content above them before it downloads and runs the scripts. If scripts are placed in the <head> section the traditional way, the browser must download and execute them before it can continue rendering the page.

But is this still true today? As some of you may know, in modern browsers this rule is no longer absolute. First of all, we can add the defer attribute to tell the browser not to wait for the script when it encounters it, and to keep parsing and rendering the page:

```html
<script defer src="996.js"></script>
<script defer src="007.js"></script>
```

However, defer scripts are still executed in order, which matters for scripts that depend on each other. In the code above, even if 007.js is very small and finishes downloading first, it cannot execute until 996.js has loaded and executed. If your JS libraries don't depend on each other, change defer to async; then whichever script loads first executes first, without waiting for the previous one.

Unfortunately, I can't control which browser and version my readers use. According to the statistics from Azure Application Insights, many people still visit my website with old browsers that do not understand defer and async.

So for now, my blog still tries to put JS at the end of the page, but not in every case. Because the framework JS files must be loaded first to render the pages correctly, they stay in the head section, while user code goes at the end of the body.

```html
<html>
<head>
    ...
    <script src="~/js/app/app-js-bundle.min.js" asp-append-version="true"></script>
    ...
</head>
<body>
    ...
    @RenderSection("scripts", false)
</body>
</html>
```

If your visitors don't use old browsers, prefer defer and async.

Use HTTP/2

According to https://w3techs.com/technologies/details/ce-http2, as of December 2019, approximately 42.6% of the world's websites had upgraded to HTTP/2. It can improve network performance in several areas (from Wikipedia):

Decrease latency to improve page load speed in web browsers by considering:

- data compression of HTTP headers

- HTTP/2 Server Push

- pipelining of requests

- fixing the head-of-line blocking problem in HTTP 1.x

- multiplexing multiple requests over a single TCP connection

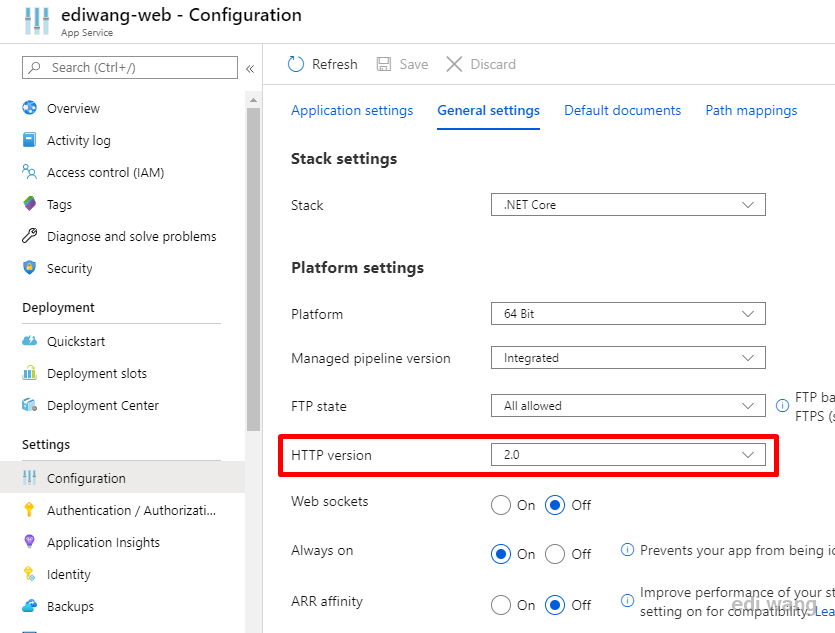

My blog is hosted on Azure App Service, where HTTP/2 can be enabled with a few mouse clicks.

If you don't use Azure, don't worry: .NET Core 3.1 has HTTP/2 enabled by default.

Enable Compression

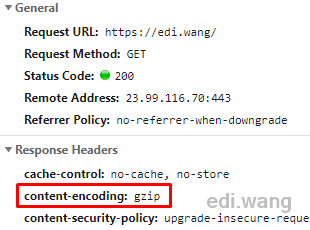

Turning on server-side response compression reduces the size of transferred resources, which improves performance. ASP.NET Core websites deployed to Azure have gzip turned on by default.

If you are not using Azure, you can enable gzip in IIS. If you are not using IIS either, don't worry, ASP.NET Core can compress responses itself: https://docs.microsoft.com/en-us/aspnet/core/performance/response-compression?view=aspnetcore-3.1
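As a rough sketch of what the linked documentation describes, response compression in an ASP.NET Core 3.1 application could be wired up as shown here. The Startup layout is the standard template; enabling compression over HTTPS and choosing the Fastest level are assumptions of this example, not settings taken from my blog, and the linked docs discuss the security trade-off of compressing HTTPS responses.

```csharp
using System.IO.Compression;
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.ResponseCompression;
using Microsoft.Extensions.DependencyInjection;

public class Startup
{
    public void ConfigureServices(IServiceCollection services)
    {
        // Register the response compression services with a gzip provider.
        services.AddResponseCompression(options =>
        {
            // Off by default; turning it on is an assumption of this sketch.
            options.EnableForHttps = true;
            options.Providers.Add<GzipCompressionProvider>();
        });

        // Favor speed over compression ratio (assumed setting).
        services.Configure<GzipCompressionProviderOptions>(options =>
        {
            options.Level = CompressionLevel.Fastest;
        });

        services.AddControllersWithViews();
    }

    public void Configure(IApplicationBuilder app)
    {
        // Must be registered before any middleware that writes the response body.
        app.UseResponseCompression();
        app.UseStaticFiles();
        app.UseRouting();
        app.UseEndpoints(endpoints => endpoints.MapDefaultControllerRoute());
    }
}
```

If a reverse proxy or the hosting platform already compresses responses, this middleware is probably unnecessary.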

Why not SPA?

Since 2014, SPA frameworks such as Angular have gradually become the mainstream of front-end development. The problem they solve is improving the responsiveness of the front end and bringing web applications as close as possible to the native app experience. Many friends have asked me why my blog does not use Angular. Is it because I don't have Angular skills?

It's not that simple. In fact, my current job is actually writing Angular, not C# (interesting, isn't it?). My blog used AngularJS and Angular 2 a few years ago. After that experience, I found that Angular brings little benefit to a content site like my blog.

In fact, this is not surprising. Before blindly selecting a framework, we have to pay attention to a prerequisite: SPA frameworks are aimed at web applications. What does "application" mean? It means heavily interactive sites like the Azure portal or Outlook on the web, whose goal is to look and behave just like a native program installed on your system. For these scenarios, an SPA framework can enhance the user experience while lowering the development effort. But a blog is a content site, not an application. The only interactions a blog offers the user are comments and search, so an SPA is not suitable for the job. It's like going to the market to buy food: riding a bike is more convenient than driving a tank.

Therefore, keep in mind that what is popular today is not necessarily suitable for every project!

For reference, there is an article on Microsoft docs: Choose Between Traditional Web Apps and Single Page Apps (SPAs)

"You should use traditional web applications when:

- Your application's client-side requirements are simple or even read-only.

- Your application needs to function in browsers without JavaScript support.

- Your team is unfamiliar with JavaScript or TypeScript development techniques.

You should use a SPA when:

- Your application must expose a rich user interface with many features.

- Your team is familiar with JavaScript and/or TypeScript development.

- Your application must already expose an API for other (internal or public) clients."

Back-end Practices

Try to avoid Exceptions

A .NET Exception is a special type: as soon as one is thrown, it incurs overhead in the CLR, regardless of whether user code handles it. So try to avoid throwing exceptions, and especially avoid using them to control program flow, a point often made in .NET technical articles. Here's an example of exception misuse I've seen in my company's code, which tries to determine whether the input is a number:

```csharp
try
{
    Convert.ToInt32(userInput);
    return true;
}
catch (Exception)
{
    return false;
}
```

But in fact, it should be:

```csharp
// TryParse reports failure through its return value instead of throwing.
return int.TryParse(userInput, out _);
```

Another question about exceptions is whether you need to design your own exception types for business errors. Return an error code or throw an exception? I have no definite conclusion on this point. My current practice is to throw exceptions for invalid parameters, but to return an error instead when a business rule is violated. For example, I don't define a PostNotFoundException type to describe a blog post that has been deleted, because a large number of requests from automated scanning tools ask for non-existent articles on my blog. If I modeled the 404 scenario with exceptions, the CLR would soon be overwhelmed by them.

Refer to: https://devblogs.microsoft.com/cbrumme/the-exception-model/
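To illustrate the "return an error instead of throwing" practice for missing posts, here is a minimal sketch. The `Post` and `PostLookup` types are hypothetical and use an in-memory dictionary in place of the real database; they are not the blog's actual classes.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical types for illustration only.
public class Post
{
    public string Slug { get; set; }
    public string Title { get; set; }
}

public class PostLookup
{
    private readonly Dictionary<string, Post> _posts =
        new Dictionary<string, Post>(StringComparer.OrdinalIgnoreCase);

    public void Add(Post post)
    {
        // An invalid argument is a programming error, so throwing is appropriate.
        if (post == null) throw new ArgumentNullException(nameof(post));
        _posts[post.Slug] = post;
    }

    // A missing post is an expected outcome (scanners, stale links),
    // so it is reported through the return value, not an exception.
    public bool TryGetPost(string slug, out Post post)
    {
        if (string.IsNullOrWhiteSpace(slug))
        {
            post = null;
            return false;
        }

        return _posts.TryGetValue(slug, out post);
    }
}
```

A controller action can then map a `false` result to a 404 response without any exception being thrown on that path.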

AsNoTracking() for Entity Framework

Any .NET team can argue about Entity Framework vs Dapper for a whole day. In fact, although EF has limitations in many scenarios, it's not that bad. The catch is that using it correctly and avoiding performance problems takes a lot of learning. The most common case is read-only data: you can use AsNoTracking() to tell EF not to track these objects, which saves memory and improves performance. This is exactly the case for my blog. Most operations only read data, and the data doesn't change after being read. So I use AsNoTracking() by default:

```csharp
public IReadOnlyList<T> Get(ISpecification<T> spec, bool asNoTracking = true)
{
    return asNoTracking ?
        ApplySpecification(spec).AsNoTracking().ToList() :
        ApplySpecification(spec).ToList();
}
```

In addition, I wrote an article in 2012, 'Performance tips for Entity Framework' (sorry, it is written in Chinese), which still applies to .NET Core today.

And in Entity Framework Core 3.x, Microsoft stopped silently evaluating queries on the client: a query that cannot be translated to SQL now throws instead, which prevents many performance issues caused by incorrect use of EF.

Database DTU

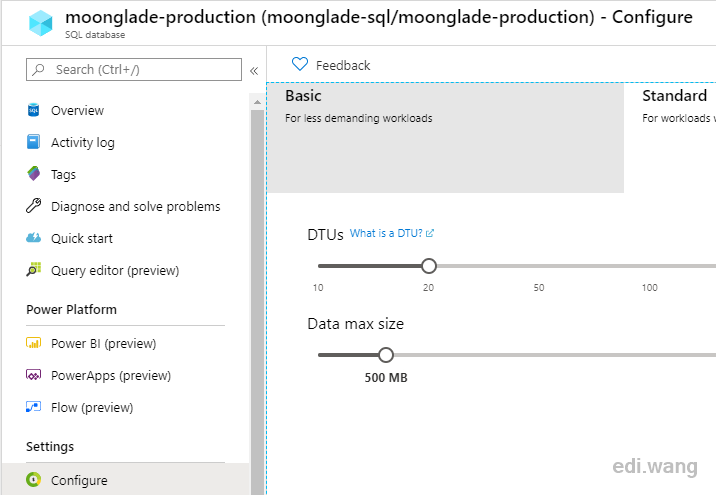

My blog uses the DTU model for Azure SQL Database. Frequent requests can exhaust the DTUs, so subsequent requests are queued. So the first optimization is to increase DTU capacity; currently, 20 DTUs are enough.

You can check the database dashboard in Azure to see if your DTU is enough.

Memory and Cache, make fewer calls to the database

The computer's memory is there to be used, not saved. Programs trade either space for time or time for space. Using memory for caching, rather than calling the database every time, improves performance.

In cloud environments in particular, database calls are often the most time-consuming operations (Application Insights tracks them as dependency calls). If an in-memory cache doesn't fit your needs, products such as Redis can be used instead, depending on the project.

In my blog, caches are everywhere. For example, post categories and pages, which are not updated frequently, can be cached so that the database isn't queried on every request. In addition, data such as configuration is best designed as a singleton: load it once when the website starts instead of re-reading it from the database every time. This greatly reduces the pressure on the database and improves the responsiveness of the site.

```csharp
var cacheKey = $"page-{routeName.ToLower()}";
var pageResponse = await cache.GetOrCreateAsync(cacheKey, async entry =>
{
    // Runs only on a cache miss; the result is stored under cacheKey.
    var response = await _customPageService.GetPageAsync(routeName);
    return response;
});
```

In addition to database results, local and remote images or other types of files can also be cached to improve performance.
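The "load the configuration once" idea mentioned earlier in this section can be sketched roughly as follows. `BlogConfig`, `BlogConfigProvider`, and the loader delegate are hypothetical names for illustration; this version loads lazily on first access rather than strictly at startup.

```csharp
using System;
using System.Threading;

// Hypothetical configuration shape, for illustration only.
public class BlogConfig
{
    public string SiteTitle { get; set; }
    public int PostsPerPage { get; set; }
}

public class BlogConfigProvider
{
    private readonly Lazy<BlogConfig> _config;

    // loadFromDatabase stands in for the real (expensive) database query.
    public BlogConfigProvider(Func<BlogConfig> loadFromDatabase)
    {
        if (loadFromDatabase == null) throw new ArgumentNullException(nameof(loadFromDatabase));

        // ExecutionAndPublication makes sure the loader runs only once,
        // even when several requests arrive at the same time.
        _config = new Lazy<BlogConfig>(
            loadFromDatabase, LazyThreadSafetyMode.ExecutionAndPublication);
    }

    // Every caller after the first gets the already loaded instance.
    public BlogConfig Current => _config.Value;
}
```

In ASP.NET Core, such a provider would typically be registered with `services.AddSingleton` so the same instance serves every request. If the configuration can be edited at runtime, the provider also needs a way to reload it, which this sketch leaves out.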

Sacrifice space for time

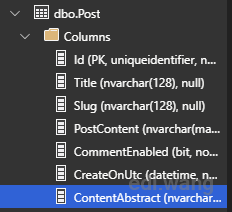

In my blog, the post list page displays an abstract of each post, which is the first 400 words of the article. In my old .NET Framework blog, I SELECTed the entire post content each time and used Substring() to take the first 400 words. Because EF was used, it was hard to do the substring in SQL, so this cost a lot of time and network transfer.

In the .NET Core version of my blog, I adjusted the design by adding a new column to the post table dedicated to storing the abstract (the first 400 words of the content). It is calculated and stored in the database when a post is created or edited, so I don't have to SELECT the entire content when displaying the list of articles.

While such a design certainly violates database normalization principles, it greatly improves performance.

```csharp
var spec = new PostPagingSpec(pageSize, pageIndex, categoryId);
return _postRepository.SelectAsync(spec, p => new PostListItem
{
    Title = p.Title,
    Slug = p.Slug,
    ContentAbstract = p.ContentAbstract, // SELECT only first 400 words instead of entire content
    PubDateUtc = p.PostPublish.PubDateUtc.GetValueOrDefault(),
    Tags = p.PostTag.Select(pt => new Tag
    {
        NormalizedTagName = pt.Tag.NormalizedName,
        TagName = pt.Tag.DisplayName
    }).ToList()
});
```

This practice is also common in enterprise systems. If a result is expensive to calculate and does not change, there is no need to recalculate it every time; store the result in the database to improve performance.
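The write side of the abstract column might look roughly like this. `PostAbstract` and `PostEntity` are hypothetical names, and the sketch counts characters (as the Substring() approach suggests) rather than words; the real blog may trim differently, for example by stripping markup first.

```csharp
using System;

public static class PostAbstract
{
    // Returns at most maxLength characters from the start of the content.
    public static string Build(string content, int maxLength = 400)
    {
        if (content == null) throw new ArgumentNullException(nameof(content));
        if (maxLength <= 0) throw new ArgumentOutOfRangeException(nameof(maxLength));

        var trimmed = content.Trim();
        return trimmed.Length <= maxLength
            ? trimmed
            : trimmed.Substring(0, maxLength);
    }
}

// Hypothetical entity, for illustration only.
public class PostEntity
{
    public string Content { get; private set; }
    public string ContentAbstract { get; private set; }

    // Called when a post is created or edited, so the stored abstract
    // never gets out of sync with the content.
    public void SetContent(string content)
    {
        ContentAbstract = PostAbstract.Build(content);
        Content = content;
    }
}
```

Routing every content change through a single method is one way to contain the main risk of a denormalized column, which is the two values drifting apart.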

In my blog, subscription data such as RSS/ATOM/OPML used to be fetched from the database on every request and output to the client. However, this kind of data also rarely changes. So I designed it to be cached as XML files in a temporary directory. When the first user visits the endpoint, the system fetches the data from the database and stores the result as XML, so subsequent requests don't need to hit the database and just return the XML. Only when an article is modified does my blog delete the cached file and let it be regenerated on the next request.
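The file-based feed cache described above can be sketched as follows. `FileFeedCache` and its members are hypothetical names, the generator delegate stands in for the database query plus XML serialization, and a single process-wide lock is assumed to be good enough for a low-traffic site.

```csharp
using System;
using System.IO;

public class FileFeedCache
{
    private readonly string _directory;
    private readonly object _lock = new object();

    public FileFeedCache(string directory)
    {
        _directory = directory ?? throw new ArgumentNullException(nameof(directory));
        Directory.CreateDirectory(_directory);
    }

    // feedName is assumed to be a trusted, fixed name such as "rss" or "atom".
    private string PathFor(string feedName) =>
        Path.Combine(_directory, feedName + ".xml");

    // Returns the cached XML, generating and storing it on the first request.
    public string GetOrCreate(string feedName, Func<string> generateXml)
    {
        var path = PathFor(feedName);
        lock (_lock)
        {
            if (File.Exists(path))
            {
                return File.ReadAllText(path);
            }

            var xml = generateXml(); // e.g. query the database and build the feed
            File.WriteAllText(path, xml);
            return xml;
        }
    }

    // Call this after a post is created, edited, or deleted.
    public void Invalidate(string feedName)
    {
        lock (_lock)
        {
            File.Delete(PathFor(feedName)); // does nothing if the file is absent
        }
    }
}
```

The next request after `Invalidate` regenerates the file, which matches the "drop and rebuild on demand" behavior described in the paragraph above.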

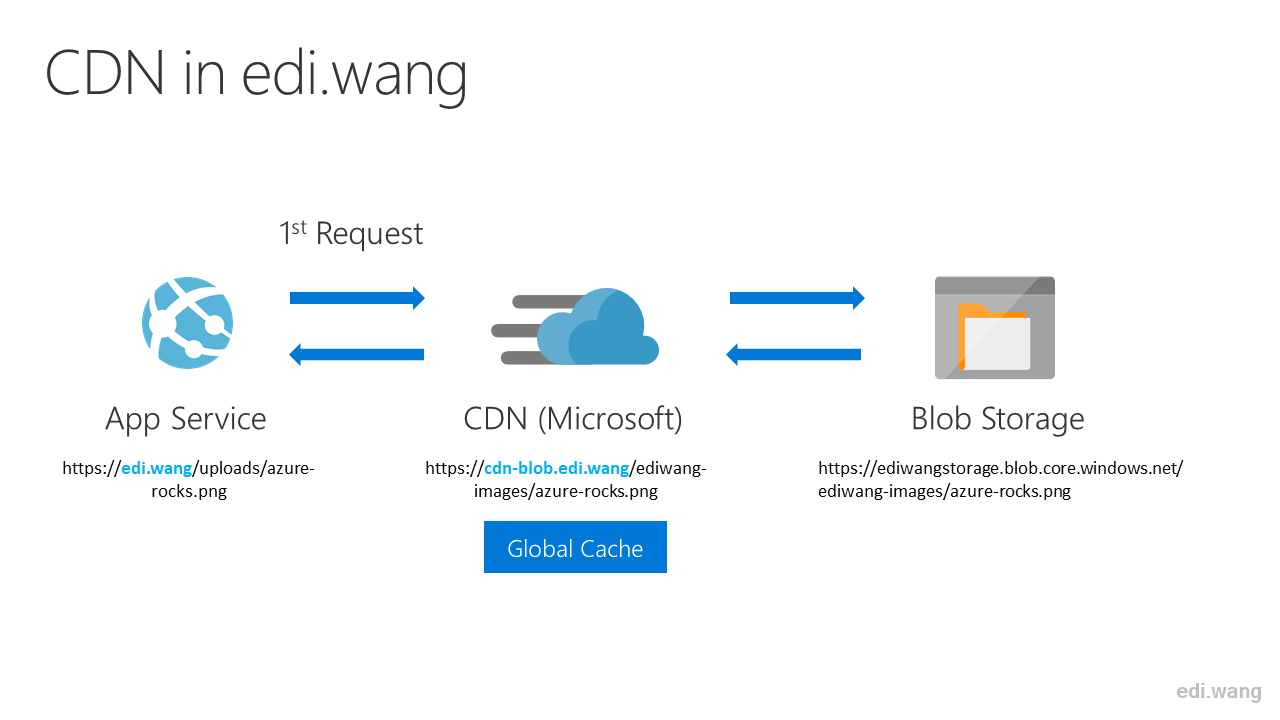

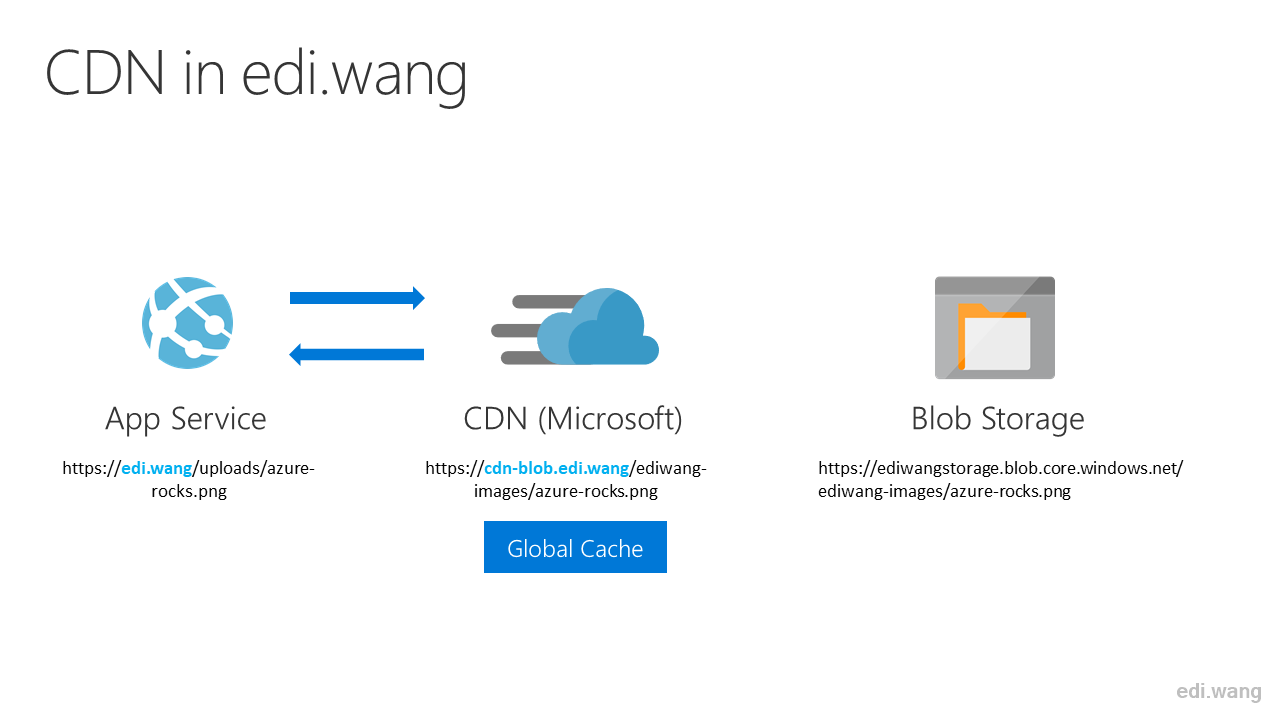

CDN

Try to use a CDN to serve static resources, and configure DNS prefetch so that DNS resolution happens ahead of time. My blog has an abstraction over image resources rather than serving static files directly to the client; it currently supports both Azure Blob Storage and the local file system as image providers. With either kind of storage, the server still has to read each image from its location and return it to the client, which puts a lot of pressure on the server even with MemoryCache.

I'm currently using Azure Blob as the image provider. Previously, reading an image file worked like this:

- First request: The webserver goes to Azure Blob to get the image file, and the client goes to the webserver to get the image file.

- Subsequent requests: Hit memory cache, returning only the picture from the webserver to the client without fetching it again from Azure Blob.

However, even if subsequent requests do not go to Azure Blob, they still have to hit the web server, which is a significant overhead. So I decided to use Azure CDN so that image requests bypass my own web server and reach Azure Blob through the CDN under my custom domain.

So now, the process of reading an image file is:

- First request: The CDN checks whether it has cached the image file; if not, it fetches the file from Azure Blob and caches it.

- Subsequent requests: The CDN returns the cached image file to the client without fetching it again from Azure Blob.

As a result, the image requests generated by users reading a blog post only pass through the Azure CDN server and do not put pressure on the webserver.

In addition, you can add dns-prefetch to the page's head, pointing to the CDN domain name:

```html
<link rel="dns-prefetch" href="https://cdn-blob.edi.wang" />
```

This allows the browser to resolve the address of the CDN server in advance, speeding up page loading even more.

Log level

Many programmers use the same log configuration locally and in production. Locally, we usually enable low-level logs such as Debug and Trace to help us develop and test. But production runs relatively stable releases that don't really need these low-level logs, and logging every event has a significant impact on application performance.

So my practice is that the production environment only enables log levels of "Warning" and above. Only when I encounter a difficult problem that requires detailed trace data do I temporarily enable the Debug log for several hours.

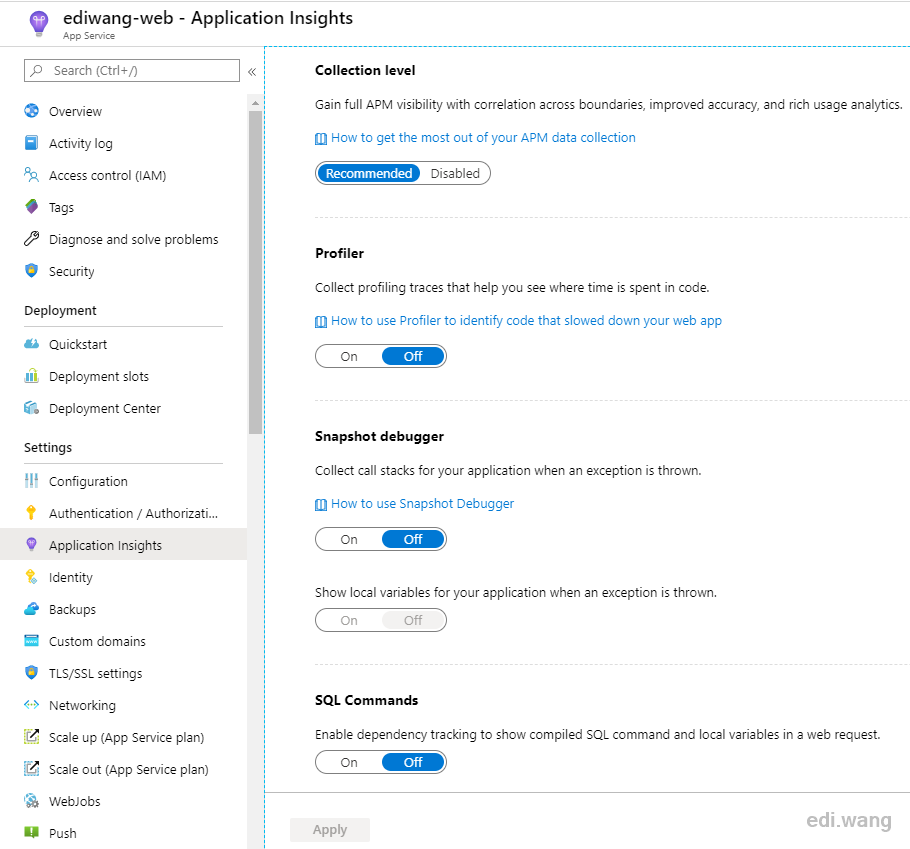

Don't enable profilers if you don't need them

Similarly, APM tools typically provide a variety of profilers, but these generally affect performance. Even Azure's own Application Insights does. So it is generally not recommended to turn on profilers unless your website runs into issues that require careful investigation.

Summary

There is no universal strategy for performance optimization. The methods need to be adjusted to the system, the business, and the load, but the overall idea is to reduce unnecessary calls and overhead. Sometimes you also need to adjust the deployment architecture of your application; on Azure, for example, you can add Traffic Manager or Front Door, or use load balancing.

In system design, don't always stick to theory, such as database normalization principles. Analyze the business situation you face and make adjustments. There is no "best practice" in the world that can be copied perfectly, only the practice that best fits your business.

yang

I found a tiny bug: if I read this post in night mode on my phone, the post time is invisible.

Slow-Mo

It's all nice and stuff, but your page took 3.24 s to load on a gigabit connection in the US Northeast.